Introduction

I’ve now worked at three different companies as a tester, and I’ve read, written and executed many different types and styles of test case. My time at a large corporation in particular, working with large numbers of test cases written by many different people, gave me varied experience with them.

Not only that, but these three companies had different approaches to their processes, and their testing reflected that. I’ve worked with gigantic test suites of thousands of test cases, projects where the test cases were a single spreadsheet, and projects where I tested with no test cases at all.

Which raises the question: do we need test cases? Is there such a thing as too many? Or too few?

The realisation

I once asked my testing team this question - what do you find test cases useful for? Some of the answers I got back were something like this:

“To make sure we check everything”

“To work out what to test”

“To help us learn other areas of the system we haven’t tested before”

“To have confidence we have tested everything and not forgotten anything”

There’s a common theme here: the realisation that test cases are just a form of documentation. They document what you are testing and the kinds of tests you want to run. Not only that, but as testers we use test cases to assist us in learning the system and designing our tests. In other words, we write out test cases in order to figure out what tests we want to run.

So if we’re not even designing our tests before we write them, then how can we hope to write them to a good standard? Are we even thinking about writing them to a standard? Can all tests fit any particular standard?

By having this documentation and putting a tick next to each test, testers also find confidence that they have thoroughly tested the system. So if test cases are a form of documentation…

Do we need documentation?

I think any tester would answer this with a yes. Without documentation, you are wholly reliant on memory and what people tell you. Documentation almost always exists somewhere - even if it’s not “formal” documentation (e.g. a written document, diagram or perhaps a wiki), it might be just an email, a set of requirements or your notes observing the behaviour of the system. Technically, the code of a program is the ultimate form of documentation - it just might not be very easy to read! Documentation is a way of articulating information in a more easily understandable way, and as testers we want to understand as much as possible about the system we are testing. So having easily understandable documentation is very valuable to us.

So, we’ve established we do need documentation, and we’ve established that test cases are only one form of documentation. Maybe then the question to ask is…

Are test cases always the right kind of documentation?

Documentation is a way of articulating information, so the way we produce documentation influences how well people understand it. There is also a cost to documentation: it can never be done in zero time, and it takes skill to create documentation that is well written and understandable to a given audience.

Documentation is a creative art, and just as there are good authors and bad authors, there is good documentation and bad documentation.

My experience at the large corporation and my experience of the wider testing industry is that there appears to be a general understanding that test cases can be written by anybody and therefore read and executed by anybody. Some note that there is a skill to this, but the focus is on the production of test cases, rather than the quality of said test cases. In other words, the idea that you could produce good testing without test cases seemed like a massive risk.

However, this desire for test cases and fear of a lack of them drives people to create test cases where they may not be useful. Most test cases I’ve seen follow this format:

Summary

Preconditions

Steps

Expected Result

Now, given a very simple piece of software that takes in value X, determines if X > 5 and then does Y or Z depending on this, we might write some test cases for this that look like this:

This is a very simplistic example, but take note of how much I’ve had to write here to fit this format and how long it takes you to read it. Maybe it takes me a few minutes to write these sections, fix any spelling mistakes and review it to make sure it makes sense.
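To make the example concrete, the behaviour being tested can be sketched in a few lines of Python. This is only an illustration under assumptions, not the actual system: the function name `handle`, the return values "Y" and "Z", and the choice that values greater than 5 lead to Y are all hypothetical, since the example above does not say which branch is which.

```python
def handle(x):
    """Hypothetical system under test: Y when x > 5, otherwise Z (assumed mapping)."""
    return "Y" if x > 5 else "Z"


# Each row is (input, expected outcome), focused on the boundary at 5.
CHECKS = [
    (4, "Z"),    # just below the boundary
    (5, "Z"),    # on the boundary: 5 is not greater than 5
    (6, "Y"),    # just above the boundary
    (-1, "Z"),   # negative input
    (5.1, "Y"),  # non-integer just above the boundary
]


def run_checks():
    """Return (input, expected, actual) for every check that fails."""
    return [(x, expected, handle(x)) for x, expected in CHECKS if handle(x) != expected]


if __name__ == "__main__":
    failures = run_checks()
    print("all checks passed" if not failures else failures)
```

Each row in `CHECKS` stands in for what would be one full Summary / Preconditions / Steps / Expected Result entry in the written format.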

Now, consider if I documented the same example as a diagram:

How long did it take me to draw this? About 30 seconds in a decent flowchart program (such as yEd). I had fewer errors to correct and this particular example mapped very easily into a flowchart. How long did it take you to understand this diagram? How long did it take you to read the test cases? Which was most effective at helping you understand the behaviour? How long would it take you to think of some test ideas?

Hang on, where can I document my tests?

So we’ve not satisfied the desire for some documentation of our tests here. This diagram only satisfies the need for an explanation of the system. Test cases outline specific tests we may want to run. So, maybe we still need test cases and we just need a few diagrams to help explain more complex areas? Is there any other solution?

Enter...mind maps

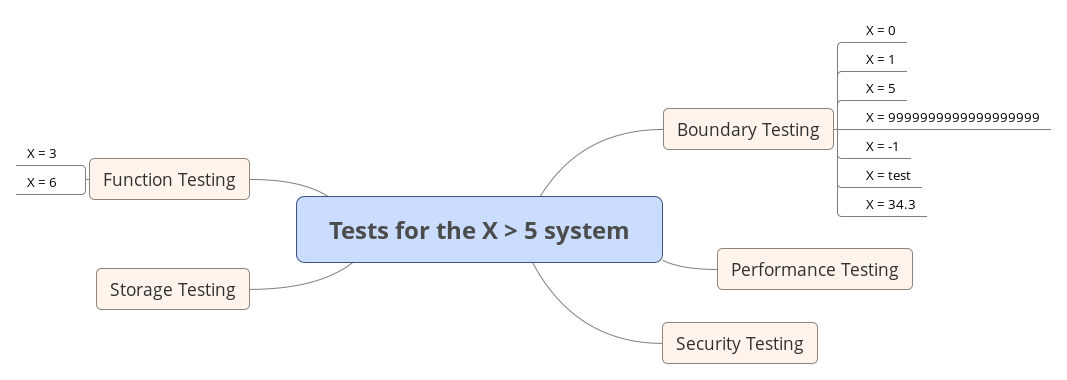

So I did a little digging and looking around, because I felt that surely there are better ways to design tests. Test cases can feel very slow to write, slow to read and hard to keep written in a particular format. This is when I came across mind maps described in this blog by Darren McMillan. A mind map is not too different from a brainstorm - simply start with a topic and begin branching ideas from it. Taking my example above, I might end up with this mind map (created in a great tool called XMind found here):

Here I’ve explored some test ideas and started to design my tests. Exploring these ideas may raise questions that I don’t know the answer to right now. Is storage a concern? If it is, how does the data get stored? Maybe security is a concern: if the underlying system is using a MySQL database, do I need to test for SQL injection? Maybe performance needs to be considered? I can also show this to other people and they can quickly understand the scope of my testing without having to read individual test cases - and I can quickly observe the scope of my own testing and keep adding to it. It’s much easier to consider the overall picture of my test plan using this diagram.

But isn’t it difficult to draw diagrams and fit things in?

Yes, and I don’t think mind maps are a replacement for test cases. Instead, I think they are a tool that can be used in conjunction with test cases to help you design and document tests more quickly and in a more readable format. However, there are still cases where it can be difficult or time-consuming to create a mind map or diagram. I envisage that you might start with a flowchart and formal documentation of a system, to understand the system you are testing. You would then create a mind map to explore what you want to test around this system. Finally, you would perhaps write test cases to formally document your tests, especially where they have complex steps.

So are there any quick, easy solutions to design and document all tests?

No, I don’t think so. By the nature of having to design, write or draw tests, they can never be created in zero time. Some systems or tests will be complex and you cannot run away from the complexity - drawing a diagram or writing test cases may be a way of reducing the complexity or making it easier to understand, but sometimes it’s not possible to simplify it.

In the process of thinking about and writing this blog post, I’ve come to the conclusion that the problem is not test cases themselves. The problem is that we are not always using the right tool for the job, and sometimes as testers we aren’t thinking carefully about the format in which we want to write or convey our tests and documentation. Flowcharts and mind maps give us more tools for this purpose, and they are definitely not the only forms of diagram or documentation we can use!

But what if I want to collate my tests and re-visit them for regression?

I think this is the crux of why test cases become relied upon so much. Why is it useful to re-visit test cases? Is it because you don’t want to miss an important test that you might have forgotten? I would argue that if we are repeating an important test regularly, we’re unlikely to forget it. And just because a test is in a test case format doesn’t mean its importance is highlighted, which means you’re either regularly running a lot of test cases “just in case” or potentially missing these tests anyway.

Wouldn’t it be better to document a system in a way that conveys information - highlighting important areas, rather than trying to fit all such information in a test case format?

That nagging feeling…

I still feel dissatisfied with my conclusions. I’m concerned that both test cases and diagrams require a lot of skill to write or draw in a way that is easily understandable by others. Every one of us will write or draw things differently and this makes it difficult to be consistent. Setting standards, training people and conducting reviews still feels necessary.

However, I do feel that in my career so far as a tester, and in my conversations with other testers, there has been too large a reliance on writing test cases over other forms and styles of documentation. I believe that as testers we can save some time and improve the quality of our testing by considering other techniques and not relying solely on test cases.

But I still feel there could be a better way!

Summary

Test cases are not always the most appropriate format to document tests.

Diagrams like flowcharts can be used to document and explain systems better than a test case. Rather than using test cases to learn a system, we could use diagrams or more formal documentation of the system - if this documentation doesn’t exist already, it’s worth creating it!

Mind maps can be used to assist in designing and documenting tests, rather than designing the tests as you write test cases. Mind maps provide better visibility of your overall test plan.

Writing test cases or drawing diagrams still requires skill - diagrams are not necessarily better than test cases all of the time and not necessarily easier to create to a consistent standard.